Introduction

Choosing the wrong AML transaction monitoring vendor doesn't just create operational headaches—it can result in regulatory penalties, exam failures, and alert backlogs that overwhelm compliance teams. Consider TD Bank's record $1.3 billion FinCEN penalty in October 2024, which stemmed directly from failing to monitor trillions of dollars in transactions and artificially capping alerts to match staffing levels.

That outcome was more than a staffing failure; it was a failure of the monitoring program and the tooling behind it. This guide gives fintechs, payments companies, and financial institutions a practical framework to evaluate AML transaction monitoring vendors beyond demos and marketing materials. It covers the questions that reveal real program risk, including:

- Alert quality and false positive rates

- Data integrity and ingestion reliability

- Model explainability and audit defensibility

- Total cost of ownership

TLDR

- Evaluating AML transaction monitoring software means going beyond feature lists—alert performance, data integrity, and vendor track record all determine real-world effectiveness

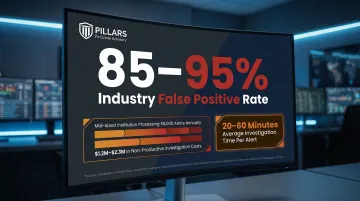

- High false positive rates (85-95% industry average) drain analyst time and increase operational risk

- Prioritize rules configurability, alert quality management, data freshness, integration capabilities, and vendor support when comparing platforms

- A vendor's implementation track record, regulatory update cadence, and post-sale support carry as much weight as the software features themselves

- Independent advisory support helps structure evaluations and run scenario testing before a costly procurement decision is made

What Is AML Transaction Monitoring Software?

AML transaction monitoring software analyzes customer transaction data—in real time or batch mode—to detect patterns, anomalies, and behaviors that may indicate money laundering, fraud, or other financial crimes. The system flags suspicious activity for analyst review or automated reporting.

The statutory foundation in the U.S. is the Bank Secrecy Act (BSA), with implementing regulations codified at 31 CFR Chapter X. The FFIEC BSA/AML Examination Manual sets the regulatory expectation that monitoring systems must be commensurate with the institution's overall risk profile.

Core Functions of AML Transaction Monitoring Platforms

Most platforms cover these core functions — but how well they execute each one is exactly what your vendor evaluation should probe:

- Rules-based alert generation — Flags transactions matching predefined criteria

- Behavioral analytics and risk scoring — Identifies anomalies based on customer behavior patterns

- Case management and investigation workflows — Organizes alert triage, documentation, and escalation

- SAR/STR filing support — Streamlines suspicious activity report preparation and submission

- Audit trail documentation — Maintains compliance records for regulatory examinations

Modern platforms increasingly use machine learning to supplement or replace static rule sets. AI-based detection can reduce false positives and surface complex typologies — but it requires proper calibration, human oversight, and ongoing tuning to perform effectively.

Regulators have been explicit about this. The April 2021 Interagency Statement confirmed that Federal Reserve SR 11-7 and OCC Model Risk Management guidance apply to BSA/AML systems, including those driven by AI and machine learning. Understanding these expectations shapes what you should demand from any vendor you evaluate.

What to Look for When Evaluating AML Transaction Monitoring Vendors

No two institutions face identical risk profiles. A fintech processing high-volume peer-to-peer payments has very different monitoring needs than a community bank or a money services business (MSB). The evaluation factors below help compliance leaders connect vendor capabilities to their specific operational and regulatory requirements.

Rules Engine Flexibility and Configurability

Compliance programs must reflect the institution's specific risk appetite, customer segments, transaction types, and geography. A vendor whose platform requires IT involvement every time a threshold is adjusted creates compliance bottlenecks.

Ask vendors specifically:

- How are rule changes managed?

- How long do threshold adjustments take to implement?

- Can compliance teams modify rules without developer support?

Rule configurability directly affects alert relevance, investigator efficiency, and an institution's ability to demonstrate a documented, risk-based approach to regulators during exams.
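One way to picture configurability is a rule whose thresholds live in data rather than in code, so a compliance analyst can adjust them through a reviewed configuration change instead of a development ticket. The sketch below is illustrative only: the `Rule` fields, the rule IDs, and the threshold values are hypothetical and do not reflect any vendor's schema.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Rule:
    rule_id: str      # hypothetical identifier
    attribute: str    # transaction attribute the rule inspects
    threshold: float  # alert when the attribute meets or exceeds this value
    segment: str      # customer segment the rule applies to

def evaluate(rule, txn):
    """Return True when the transaction should raise an alert under this rule."""
    if txn.get("segment") != rule.segment:
        return False
    return txn.get(rule.attribute, 0.0) >= rule.threshold

# Thresholds stored as data: adjusting one is a config change, not a code change.
# All values here are made up for illustration.
rules = [
    Rule("HV_WIRE_RETAIL", "amount", 25_000.0, "retail"),
    Rule("HV_WIRE_BUSINESS", "amount", 250_000.0, "business"),
]

txn = {"segment": "retail", "amount": 30_000.0}
alerts = [r.rule_id for r in rules if evaluate(r, txn)]
print(alerts)  # ['HV_WIRE_RETAIL']
```

When a vendor demonstrates rule changes, it is worth asking whether the equivalent of editing `rules` above is versioned, approved, and logged, since that change history is what examiners will ask to see.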

Alert Quality and False Positive Management

False positives, meaning alerts that flag legitimate transactions as suspicious, are one of the biggest operational drains in transaction monitoring. Industry benchmarks put AML false positive rates between 85% and 95%, so most compliance alerts do not represent genuine financial crime risk. Investigating a single alert typically takes 20 to 60 minutes.

For a mid-sized institution processing 50,000 alerts annually with a 95% false positive rate, that is 47,500 non-productive alerts a year, or roughly $1.2M to $2.3M in analyst time depending on handling time and fully loaded labor cost.
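The arithmetic behind an estimate like this is simple enough to reproduce with your own numbers. The sketch below makes the inputs explicit; the $50 fully loaded hourly cost is an assumption chosen for illustration, not a figure from any benchmark, and the function name is hypothetical.

```python
def wasted_review_cost(alerts_per_year, false_positive_rate,
                       minutes_per_alert, hourly_cost):
    """Annual analyst cost spent on alerts that turn out to be false positives."""
    false_positives = alerts_per_year * false_positive_rate
    hours = false_positives * minutes_per_alert / 60
    return hours * hourly_cost

# Assumed inputs: 50,000 alerts, 95% false positives, $50/hour loaded cost.
low = wasted_review_cost(50_000, 0.95, minutes_per_alert=30, hourly_cost=50.0)
high = wasted_review_cost(50_000, 0.95, minutes_per_alert=60, hourly_cost=50.0)
print(f"{low:,.0f} to {high:,.0f}")  # 1,187,500 to 2,375,000
```

Under those assumptions, 30 to 60 minutes per alert brackets the range quoted above. Swapping in your own alert volume, handling time, and labor cost gives a baseline against which a vendor's claimed false positive reduction can be priced.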

During vendor demos, press for:

- Benchmarks on their false positive rates

- How their platform supports tuning over time

- Built-in analytics that help identify which rules generate noise

- Mechanisms for ongoing model calibration (rules-based or AI-driven)

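Look beyond basic accuracy metrics. When genuine suspicious activity is a tiny fraction of all alerts, a model can score high on accuracy while missing most of it. PRAUC (Precision-Recall Area Under the Curve) is better suited to highly imbalanced datasets like AML because it reflects how well true positives are ranked ahead of noise. A higher PRAUC means investigators review fewer alerts but find more of value, and SAR conversion rates rise.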
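To make the metric concrete, the sketch below computes average precision, a common step-wise summary of the area under the precision-recall curve, for two hypothetical models scoring the same ten alerts. The labels and scores are invented for illustration, and tied scores are not handled specially.

```python
def average_precision(labels, scores):
    """Mean of the precision values observed at each true-positive rank."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    total_positives = sum(labels)
    if total_positives == 0:
        raise ValueError("need at least one positive label")
    hits = 0
    precision_sum = 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            precision_sum += hits / rank  # precision at this cut-off
    return precision_sum / total_positives

# 1 = alert led to a SAR, 0 = closed as a false positive (made-up data).
labels  = [1, 0, 1, 0, 0, 0, 0, 0, 0, 0]
model_a = [0.95, 0.90, 0.85, 0.40, 0.35, 0.30, 0.25, 0.20, 0.15, 0.10]
model_b = [0.60, 0.90, 0.20, 0.80, 0.70, 0.50, 0.40, 0.10, 0.30, 0.05]

ap_a = average_precision(labels, model_a)  # true positives at ranks 1 and 3
ap_b = average_precision(labels, model_b)  # true positives at ranks 4 and 8
print(round(ap_a, 3), round(ap_b, 3))  # 0.833 0.25
```

Both models see the same 20% base rate, but model A places the productive alerts at the top of the queue. Asking a vendor for this kind of ranking metric on your own labeled history is more revealing than a single accuracy figure.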

Data Quality, Coverage, and Update Frequency

A transaction monitoring platform is only as good as the data it analyzes and the watchlist data it incorporates. Poor data lineage—where the vendor cannot clearly explain the origin or update cycle of their data—is a material compliance risk.

Get specific answers on:

- How often watchlist data is updated

- What sources feed risk databases (OFAC, UN, EU, HMT, PEPs, adverse media)

- Whether data freshness is guaranteed by SLA

- Whether they can document data extraction and loading processes

The NYDFS Part 504 Final Rule mandates strict end-to-end testing and validation of data integrity, requiring annual board or senior officer certification. Vendors must support validation of data accuracy and complete data flows from source to automated monitoring systems.
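A basic form of the data-flow validation described above is a reconciliation between the source system and what the monitoring platform actually ingested, plus a freshness check on list data. The following is a minimal sketch, assuming transactions are available as dictionaries with `id` and `amount` keys; the field names, the 24-hour limit, and the sample records are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from decimal import Decimal

def reconcile(source_txns, monitored_txns):
    """Compare IDs and total amounts between source and monitoring system."""
    source = {t["id"]: Decimal(t["amount"]) for t in source_txns}
    monitored = {t["id"]: Decimal(t["amount"]) for t in monitored_txns}
    return {
        "missing_in_monitoring": sorted(source.keys() - monitored.keys()),
        "unexpected_in_monitoring": sorted(monitored.keys() - source.keys()),
        "amount_difference": sum(source.values(), Decimal("0"))
                             - sum(monitored.values(), Decimal("0")),
    }

def is_stale(last_refresh, now, max_age):
    """True when a data feed is older than the agreed freshness limit."""
    return now - last_refresh > max_age

source = [{"id": "T1", "amount": "100.00"},
          {"id": "T2", "amount": "250.50"},
          {"id": "T3", "amount": "75.25"}]
monitored = [{"id": "T1", "amount": "100.00"},
             {"id": "T3", "amount": "75.25"}]

result = reconcile(source, monitored)
print(result["missing_in_monitoring"], result["amount_difference"])  # ['T2'] 250.50

# Hypothetical SLA: watchlist data must be no more than 24 hours old.
last_refresh = datetime(2025, 1, 1, 0, 0, tzinfo=timezone.utc)
now = datetime(2025, 1, 2, 6, 0, tzinfo=timezone.utc)
stale = is_stale(last_refresh, now, timedelta(hours=24))
print(stale)  # True
```

Amounts are held as `Decimal` rather than floats so that totals reconcile exactly. A vendor who can expose the inputs for a check like this, on a schedule, makes end-to-end validation far easier to evidence.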

Integration Architecture and API Capabilities

Transaction monitoring tools that operate in isolation from KYC data, CRM systems, core banking platforms, and payment processors produce alerts without context—making investigations slower and less accurate.

Evaluate whether vendors offer:

- Well-documented APIs with clear versioning

- Pre-built connectors for common platforms

- Documented integration timelines

- Support for real-time data feeds

Poor integration increases manual data reconciliation work and raises the risk of missing context—when transaction history is disconnected from customer risk profiles, investigators work blind. For fintechs and payments companies scaling quickly, extended implementation timelines compound that problem.

Scalability and Regulatory Adaptability

For growing fintechs and payments companies, the platform must handle increasing transaction volumes without degradation in alert quality or processing speed. Cloud-native architectures typically offer better scalability than on-premises legacy systems.

Request the following before signing:

- Performance benchmarks for high-volume processing

- SLA terms for alert processing speed

- Stress testing results

- Volume capacity limits

Equally important is regulatory adaptability. Ask vendors how quickly they updated their platforms when major regulatory changes occurred—FinCEN beneficial ownership rules, new FATF guidance, or virtual asset requirements. The FATF's June 2025 targeted update on Virtual Assets highlighted the need for Travel Rule compliance and monitoring of DeFi and unhosted wallets. Vendors who lag behind regulatory changes put their clients at exam risk.

Vendor Support Model, Implementation Track Record, and Roadmap

The vendor relationship does not end at contract signing. Implementation quality and post-sale support significantly affect program effectiveness.

Ask for:

- Client references from institutions with similar risk profiles

- Average implementation timelines

- Clarity on who owns ongoing configuration support (dedicated CSM vs. general support queue)

- Examples of how quickly they responded to regulatory changes

Ask vendors to walk through their product roadmap. Are AI/ML capabilities being actively developed? How do they incorporate regulatory feedback? A vendor who cannot articulate a clear development roadmap will likely fall behind as financial crime typologies and regulatory expectations shift—leaving clients to absorb the exam risk.

Total Cost of Ownership Beyond Licensing Fees

The upfront licensing cost is rarely the most significant expense. Factor in implementation costs, integration development hours, ongoing configuration and tuning labor, training, and the hidden cost of alert fatigue caused by a poorly calibrated system.

The total cost of financial crime compliance in the U.S. and Canada reached $61 billion in 2023, driven heavily by the labor costs of alert review. A cheaper platform that generates high false positive rates often ends up costing more when analyst time is quantified.

Build a total cost of ownership (TCO) model including:

- Licensing and subscription fees

- Implementation and integration costs

- Ongoing tuning and configuration labor

- Training and change management

- Cost of potential exam findings if the platform underperforms
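The cost categories above can be combined into a simple multi-year comparison. The sketch below is one possible shape for such a model, with alert review labor broken out as its own line because it often dominates. Every figure is hypothetical, and the cost of exam findings is left out because it is difficult to estimate with any precision.

```python
from dataclasses import dataclass

@dataclass
class VendorCosts:
    annual_license: int
    implementation: int             # one-time, includes integration work
    annual_tuning_labor: int
    annual_training: int
    annual_alert_review_labor: int  # driven largely by false positive volume

    def total(self, years):
        recurring = (self.annual_license + self.annual_tuning_labor
                     + self.annual_training + self.annual_alert_review_labor)
        return self.implementation + recurring * years

# Hypothetical vendors: A has the cheaper license, B generates fewer alerts.
vendor_a = VendorCosts(150_000, 200_000, 60_000, 20_000, 1_200_000)
vendor_b = VendorCosts(400_000, 300_000, 80_000, 20_000, 600_000)

tco_a = vendor_a.total(years=3)
tco_b = vendor_b.total(years=3)
print(tco_a, tco_b)  # 4490000 3600000
```

In this made-up comparison, the vendor with the higher license fee comes out cheaper over three years once analyst time is counted, which is the pattern the paragraph above warns about.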

Red Flags to Watch for During the Vendor Evaluation Process

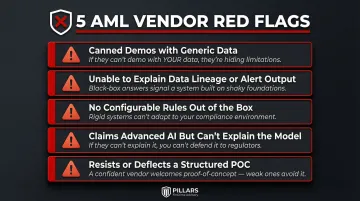

Specific warning signs should give compliance leaders pause during demos and RFP processes:

- Only shows canned demos with generic data: Ask to run a demo using your actual transaction types. A vendor who can't — or won't — likely doesn't understand your use case well enough to serve it.

- Can't explain data lineage or alert output structure: If they struggle to answer where their data comes from, how often it refreshes, or how alerts export, that signals weak data governance beneath the surface.

- No configurable rules out of the box: Platforms locked into static, pre-set scenarios can't adapt to your risk profile or emerging typologies. Flexibility isn't a luxury — it's a baseline requirement.

- Claims advanced AI but can't explain the model: Vendors who can't describe their model logic, training data, or false positive rates are selling a black box. The U.S. Treasury's December 2024 report on AI in Financial Services noted that black-box models present real explainability challenges — and regulators now expect institutions to document and justify their monitoring logic.

- Resists or deflects a structured POC: A vendor with a genuinely effective platform will welcome comparative testing with real or representative data. Pushback on a pilot is often a tell.

Spotting these red flags is one thing — structuring a POC rigorous enough to surface them is another. If your team doesn't have the internal capacity to design and run that process, an independent compliance advisor can own it directly.

How Pillars FinCrime Advisory Can Help

Pillars FinCrime Advisory is a CAMS-certified advisory firm led by Joshua Douglas, who brings 12+ years in financial crime compliance and hands-on experience designing, optimizing, and auditing transaction monitoring programs across fintechs, payments companies, and financial institutions.

When evaluating AML software vendors, Pillars works alongside your team at every stage:

- Independent vendor assessments: Requirements matrices tied to your risk profile, structured vendor demos and RFPs, and comparative evaluations without vendor bias

- Program audits and gap analysis: Deficiencies identified before regulators do, mapped to FinCEN, FFIEC, and NYDFS standards

- Alert tuning and scenario optimization: Improving alert quality and reducing false positive rates post-implementation

- SAR quality review: Ensuring suspicious activity reports meet regulatory expectations

- Regulatory exam preparation: Documentation, testing, and process validation that makes your AML/BSA program exam-ready

If you're mid-evaluation or starting from scratch, Pillars can help you avoid the common mistakes that lead to costly re-implementations — and build a program that holds up under regulatory scrutiny.

Conclusion

Effective AML transaction monitoring vendor selection demands a structured, risk-based evaluation — one that connects platform capabilities to your institution's actual transaction typologies, regulatory obligations, and operational capacity.

The right vendor is not the most popular one or the one with the most impressive demo—it's the one whose platform performs reliably against your risk profile, connects cleanly to your data pipelines and case management systems, and comes backed by a support model and roadmap that will hold up under regulatory scrutiny. Once you've made a selection, the work isn't done. Alert thresholds drift, typologies evolve, and regulators expect documented evidence that your program keeps pace. Build ongoing tuning into your operating model from day one.

A few markers that signal a vendor is genuinely fit for purpose:

- Detection logic that maps to your institution's specific customer base and transaction mix

- A model governance framework you can defend to examiners — not just slides from a sales deck

- Integration references from institutions with comparable risk profiles and data architectures

Frequently Asked Questions

What are the red flags in AML transaction monitoring?

Key red flags include structuring (transactions just below reporting thresholds), rapid movement of funds across accounts, transactions inconsistent with a customer's risk profile, and unusual geographic patterns. The specific red flags your system monitors should reflect your institution's customer base and risk appetite.
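As an illustration of how a structuring scenario might be expressed, the sketch below flags customers whose individual cash deposits each stay under the $10,000 currency transaction reporting threshold while their combined total within a short window exceeds it. The three-day window, the function name, and the sample data are assumptions for illustration; production scenarios are considerably more nuanced.

```python
from collections import defaultdict
from datetime import date, timedelta

CTR_THRESHOLD = 10_000      # U.S. CTRs apply to cash transactions over $10,000
WINDOW = timedelta(days=3)  # hypothetical look-back window

def structuring_candidates(deposits):
    """deposits: iterable of (customer_id, day, amount) cash deposits."""
    by_customer = defaultdict(list)
    for customer_id, day, amount in deposits:
        if amount < CTR_THRESHOLD:  # only sub-threshold deposits are of interest
            by_customer[customer_id].append((day, amount))
    flagged = set()
    for customer_id, items in by_customer.items():
        items.sort()
        for i, (start_day, _) in enumerate(items):
            in_window = [a for d, a in items[i:] if d - start_day <= WINDOW]
            if len(in_window) >= 2 and sum(in_window) > CTR_THRESHOLD:
                flagged.add(customer_id)
                break
    return sorted(flagged)

deposits = [
    ("C1", date(2025, 1, 2), 9_500), ("C1", date(2025, 1, 3), 9_200),   # close together
    ("C2", date(2025, 1, 2), 9_500), ("C2", date(2025, 1, 20), 9_000),  # far apart
    ("C3", date(2025, 1, 2), 2_000), ("C3", date(2025, 1, 3), 3_000),   # small total
]
flagged = structuring_candidates(deposits)
print(flagged)  # ['C1']
```

A rule this blunt would generate plenty of noise on its own, which is why segmentation and tuning matter as much as the scenario logic itself.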

What are the four pillars of an AML program?

The four pillars are internal policies and controls, designation of a BSA/AML compliance officer, ongoing employee training, and independent testing/audit. FinCEN's Customer Due Diligence Rule later added a fifth: ongoing, risk-based customer due diligence. Transaction monitoring sits within the first pillar and must be calibrated to support your program's risk-based approach.

What is the difference between rules-based and AI-based transaction monitoring?

Rules-based systems flag transactions that match predefined criteria (e.g., cash deposits exceeding a set threshold), while AI/ML-based systems learn from historical transaction data to detect anomalies and emerging patterns. Most modern platforms use a hybrid approach, and both models require ongoing tuning and human review to remain effective.

How do I reduce false positives in AML transaction monitoring?

Reducing false positives requires:

- Regular review and adjustment of alert scenarios

- Threshold calibration against actual transaction data

- Segmentation of customer risk tiers

- Behavioral analytics to filter out expected activity

This is an ongoing optimization process, not a one-time configuration.
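A useful starting point for that review is a per-rule view of how many alerts end without a filing. The sketch below assumes alert dispositions can be exported as `(rule_id, outcome)` pairs, where the outcome is either `"sar"` or `"closed"`; those labels and the rule IDs are hypothetical.

```python
from collections import Counter

def rule_noise(dispositions):
    """Return (rule_id, false_positive_rate) pairs, noisiest rule first."""
    totals, productive = Counter(), Counter()
    for rule_id, outcome in dispositions:
        totals[rule_id] += 1
        if outcome == "sar":
            productive[rule_id] += 1
    report = {r: 1 - productive[r] / totals[r] for r in totals}
    return sorted(report.items(), key=lambda item: item[1], reverse=True)

# Made-up disposition history for two rules.
dispositions = [
    ("R1", "closed"), ("R1", "closed"), ("R1", "closed"), ("R1", "closed"),
    ("R2", "sar"), ("R2", "closed"), ("R2", "sar"), ("R2", "closed"),
]
noise = rule_noise(dispositions)
print(noise)  # [('R1', 1.0), ('R2', 0.5)]
```

Rules at the top of this list are candidates for threshold changes or tighter segmentation, with the rationale documented before and after any adjustment.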

How long does it typically take to implement AML transaction monitoring software?

Implementation timelines vary widely—from a few weeks for API-based or cloud-hosted platforms to 6-12+ months for complex enterprise deployments with deep core banking integrations. Integration complexity, data migration requirements, and internal IT capacity are the primary variables affecting timeline.

When should a financial institution reassess its AML transaction monitoring vendor?

Reassess your vendor when persistent high false positive rates resist tuning, when the platform fails to keep pace with regulatory changes, when it can't scale with transaction volume growth, when support quality deteriorates, or when a regulatory exam finding directly cites monitoring program deficiencies.